Publications

2026

- WeatherArchive-Bench: Benchmarking Retrieval-Augmented Reasoning for Historical Weather Archives
  Yongan Yu, Xianda Du, Qingchen Hu, and 7 more authors
  Proceedings of the 49th International ACM SIGIR Conference on Research and Development in Information Retrieval (SIGIR 2026), 2026

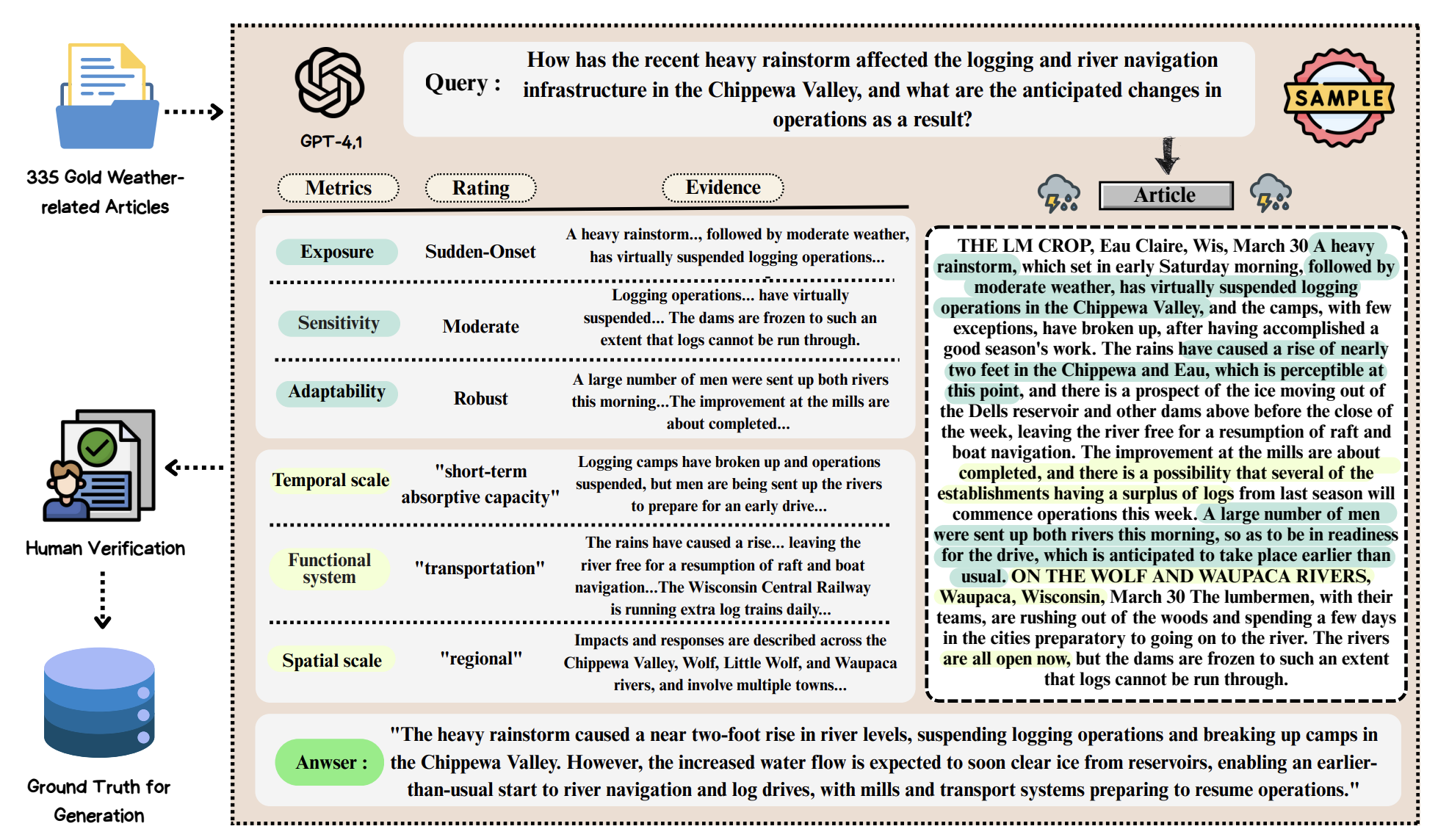

Historical archives on weather events are collections of enduring primary source records that offer rich, untapped narratives of how societies have experienced and responded to extreme weather events. These qualitative accounts provide insights into societal vulnerability and resilience that are largely absent from meteorological records, making them valuable for climate scientists to understand societal responses. However, their vast scale, noisy digitized quality, and archaic language make it difficult to transform them into structured knowledge for climate research. To address this challenge, we introduce WeatherArchive-Bench, the first benchmark for evaluating retrieval-augmented generation (RAG) systems on historical weather archives. WeatherArchive-Bench comprises two tasks: WeatherArchive-Retrieval, which measures a system’s ability to locate historically relevant passages from over one million archival news segments, and WeatherArchive-Assessment, which evaluates whether Large Language Models (LLMs) can classify societal vulnerability and resilience indicators from extreme weather narratives. Extensive experiments across sparse, dense, and re-ranking retrievers, as well as a diverse set of LLMs, reveal that dense retrievers often fail on historical terminology, while LLMs frequently misinterpret vulnerability and resilience concepts. These findings highlight key limitations in reasoning about complex societal indicators and provide insights for designing more robust climate-focused RAG systems from archival contexts.

@inproceedings{yu2025weatherarchive,
  title = {WeatherArchive-Bench: Benchmarking Retrieval-Augmented Reasoning for Historical Weather Archives},
  author = {Yu, Yongan and Du, Xianda and Hu, Qingchen and Liang, Jiahao and Ni, Jingwei and Qiang, Dan and Huang, Kaiyu and McKenzie, Grant and Sieber, Renee and Mo, Fengran},
  booktitle = {Proceedings of the 49th International ACM SIGIR Conference on Research and Development in Information Retrieval (SIGIR 2026)},
  url = {https://arxiv.org/abs/2510.05336},
  year = {2026},
}

- THiNK: Can Large Language Models Think-aloud?
  Yongan Yu, Mengqian Wu, Yiran Lin, and 1 more author
  International Conference on Artificial Intelligence in Education (AIED 2026), 2026

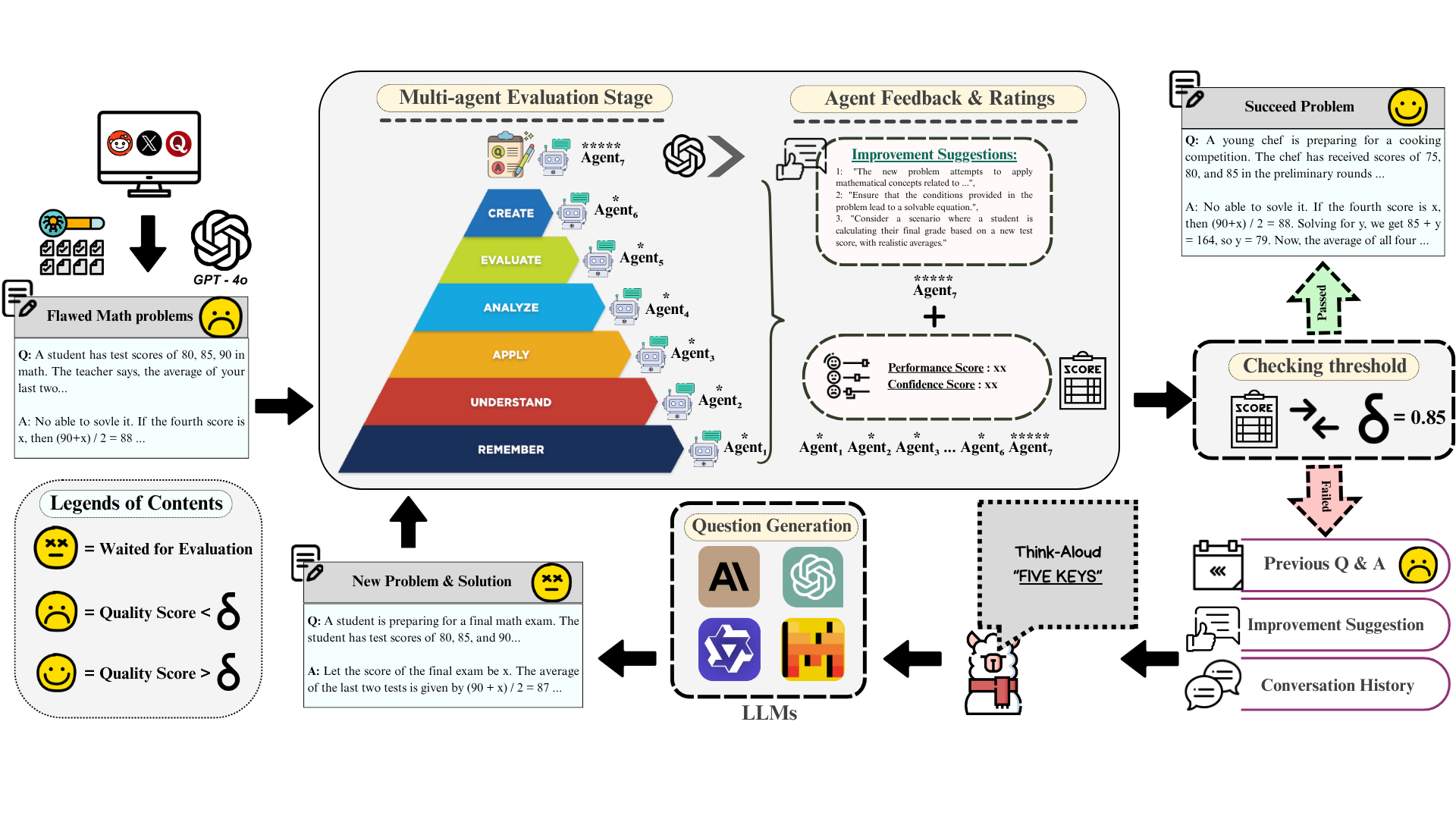

Assessing higher-order thinking skills in large language models (LLMs) remains a fundamental challenge, especially in tasks that go beyond surface-level accuracy. In this work, we propose THiNK (Testing Higher-order Notion of Knowledge), a multi-agent, feedback-driven evaluation framework grounded in Bloom’s Taxonomy. THiNK frames reasoning assessment as an iterative task of problem generation, critique, and revision, encouraging LLMs to think aloud through step-by-step reflection and refinement. This enables a systematic evaluation of both lower-order (e.g., remember, understand) and higher-order (e.g., evaluate, create) thinking skills. We apply THiNK to seven state-of-the-art LLMs and perform a detailed cognitive analysis of their outputs. Results reveal that while models reliably perform well on lower-order categories, they struggle with applying knowledge in realistic contexts and exhibit limited abstraction. Structured feedback loops significantly improve reasoning performance, particularly in higher-order thinking. Qualitative evaluations further confirm that THiNK-guided outputs better align with domain logic and problem structure. Our framework provides a scalable methodology for probing and enhancing LLM reasoning, offering new directions for evaluation grounded in learning science. The code is available at our GitHub repository.

@inproceedings{yu2025think,
  title = {{THiNK}: Can Large Language Models Think-aloud?},
  author = {Yu, Yongan and Wu, Mengqian and Lin, Yiran and Lobczowski, Nikki G},
  booktitle = {International Conference on Artificial Intelligence in Education (AIED 2026)},
  url = {https://arxiv.org/abs/2505.20184},
  year = {2026},
}

- CodeFlowBench: A Multi-turn, Iterative Benchmark for Complex Code Generation
  Sizhe Wang, Zhengren Wang, Dongsheng Ma, and 5 more authors
  2026

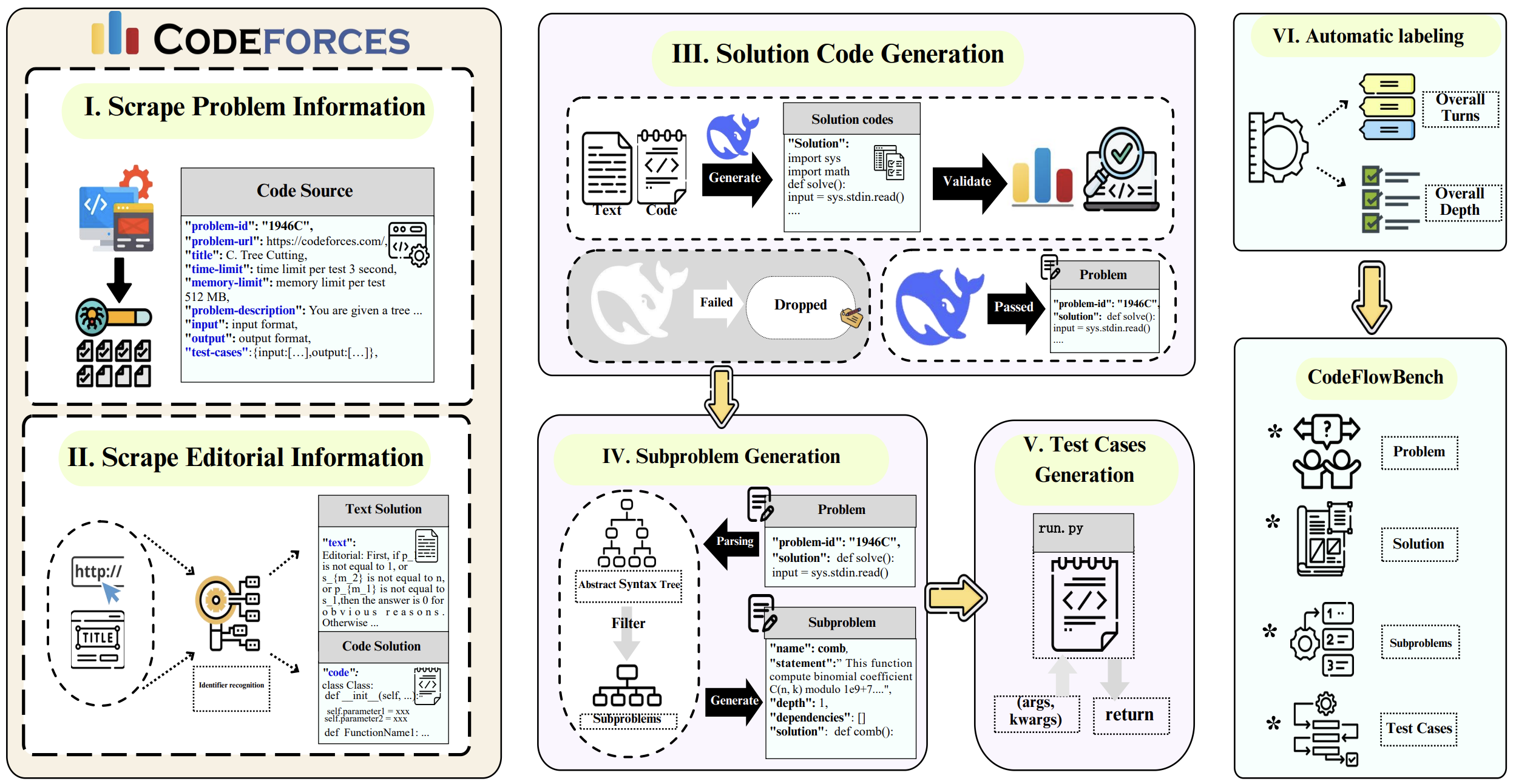

Modern software development demands code that is maintainable, testable, and scalable, achieved by organizing the implementation into modular components and iteratively reusing existing code. We formalize this iterative, multi-turn paradigm as codeflow and introduce CodeFlowBench, the first benchmark designed to comprehensively evaluate LLMs’ ability to perform codeflow, namely implementing new functionality by reusing existing functions over multiple turns. CodeFlowBench comprises 5,258 problems from Codeforces and is continuously updated via an automated pipeline, which decomposes each problem into subproblems with unit tests based on dependency tree analysis and dataflow analysis. We further propose a novel evaluation framework featuring a dual assessment protocol and structural metrics derived from dependency trees. Extensive experiments on 16 popular LLMs reveal significant performance degradation in multi-turn scenarios. For instance, o1-mini retains only 20.8% Pass@1 in the multi-turn scenario versus 37.8% in the single-turn scenario. More fine-grained analysis shows that model performance correlates inversely with dependency complexity. These findings not only highlight the critical challenges of supporting real-world workflows, but also establish CodeFlowBench as an essential tool for advancing code generation research.

@misc{peking_codeflow,
  title = {CodeFlowBench: A Multi-turn, Iterative Benchmark for Complex Code Generation},
  author = {Wang, Sizhe and Wang, Zhengren and Ma, Dongsheng and Yu, Yongan and Ling, Rui and Li, Zhiyu and Xiong, Feiyu and Zhang, Wentao},
  year = {2026},
  archiveprefix = {arXiv},
  publisher = {arXiv},
  primaryclass = {cs.SE},
  url = {https://arxiv.org/abs/2504.21751},
  journal = {Findings of the Association for Computational Linguistics: ACL 2026}
}

2025

- MaintainCoder: Maintainable Code Generation under Dynamic Requirements
  Zhengren Wang, Rui Ling, Chufan Wang, and 5 more authors
  Advances in Neural Information Processing Systems (NeurIPS 2025), 2025

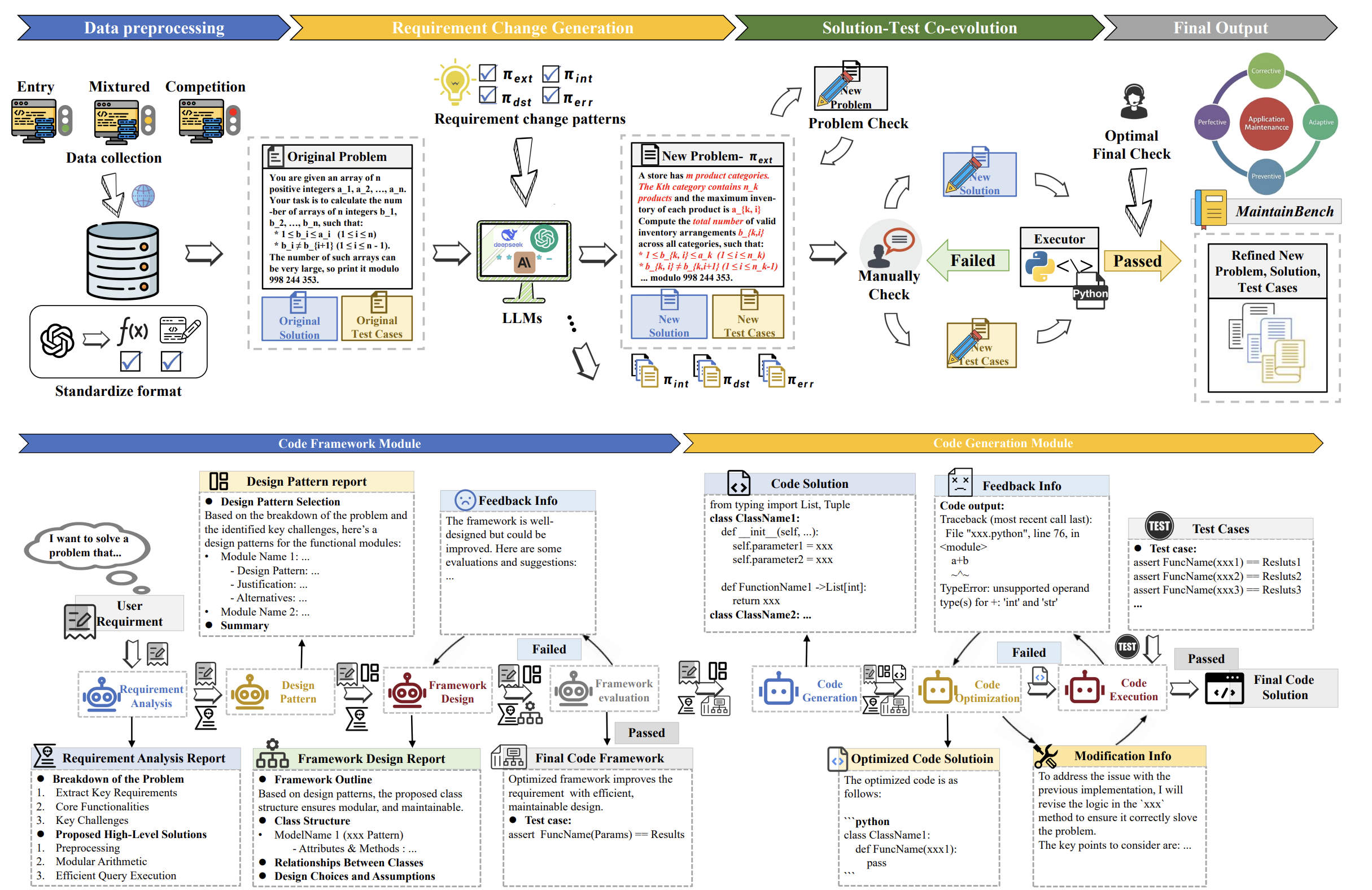

Modern code generation has made significant strides in functional correctness and execution efficiency. However, these systems often overlook a critical dimension in real-world software development: maintainability. To handle dynamic requirements with minimal rework, we propose MaintainCoder as a pioneering solution. It integrates the Waterfall model, design patterns, and multi-agent collaboration to systematically enhance cohesion and reduce coupling, achieving clear responsibility boundaries and better maintainability. We also introduce MaintainBench, a benchmark comprising requirement changes and novel dynamic metrics on maintenance efforts. Experiments demonstrate that existing code generation methods struggle to meet maintainability standards when requirements evolve. In contrast, MaintainCoder improves dynamic maintainability metrics by more than 60% while achieving even higher correctness of the initial code. Furthermore, while static metrics fail to accurately reflect maintainability and even contradict each other, our proposed dynamic metrics exhibit high consistency. Our work not only provides the foundation for maintainable code generation, but also highlights the need for more realistic and comprehensive code generation research.

@inproceedings{wang2025maintaincoder,
  title = {MaintainCoder: Maintainable Code Generation under Dynamic Requirements},
  author = {Wang, Zhengren and Ling, Rui and Wang, Chufan and Yu, Yongan and Wang, Sizhe and Li, Zhiyu and Xiong, Feiyu and Zhang, Wentao},
  booktitle = {Advances in Neural Information Processing Systems (NeurIPS 2025)},
  url = {https://neurips.cc/virtual/2025/poster/117862},
  year = {2025},
}

- MILO: An LLM Multi-Stage Conversational Agent for Fostering Teenagers’ Mental Resilience
  Han Bao, Yongan Yu, Bohan Wang, and 2 more authors
  In Adjunct Proceedings of the 38th Annual ACM Symposium on User Interface Software and Technology (UIST 2025), 2025

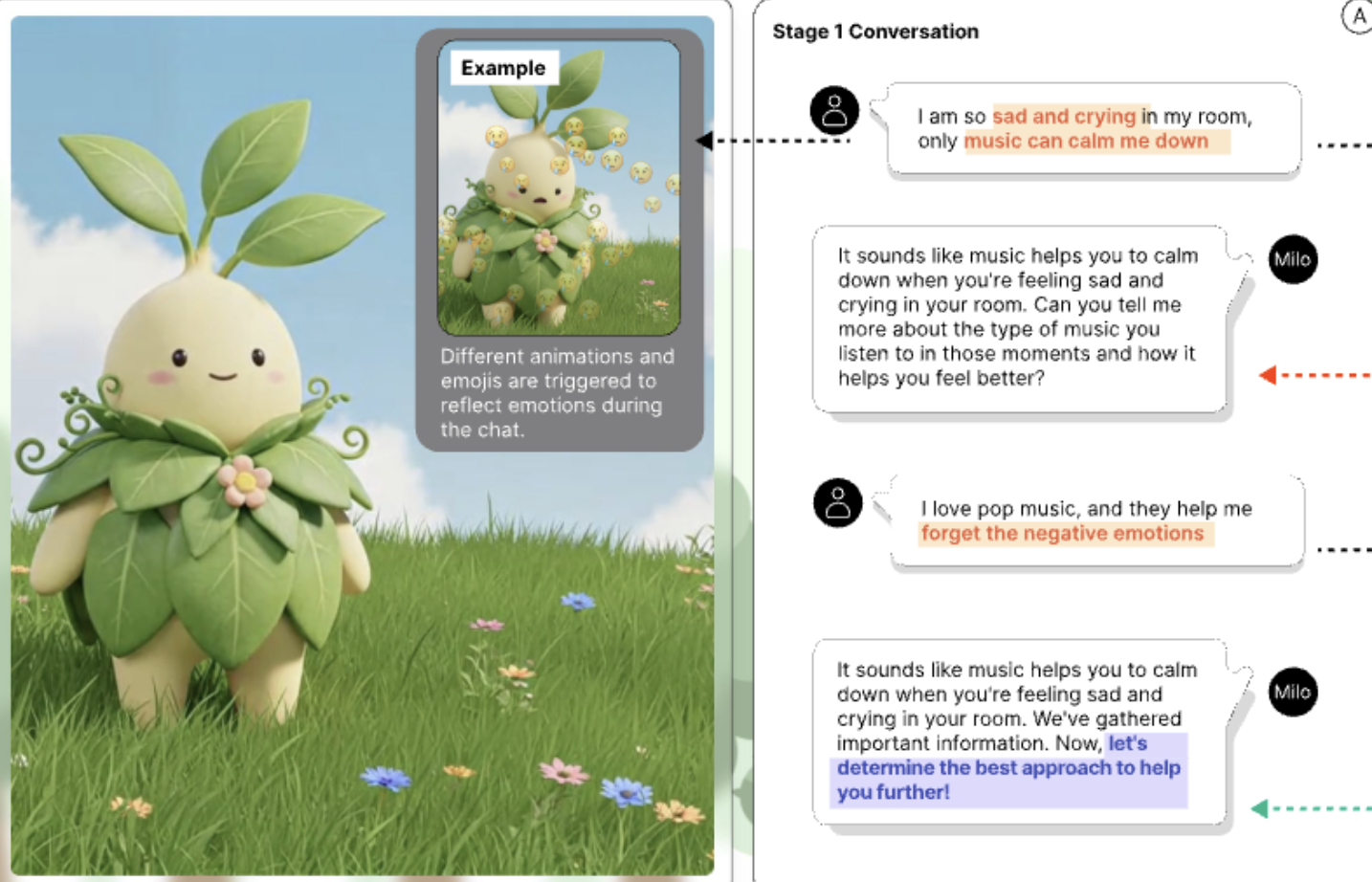

Adolescence is a significant period that shapes long-term development and well-being. Mental disorders contribute to 15% of the global disease burden among teenagers, according to the WHO. Adverse well-being during adolescence can not only compromise physical health but also lead to a wide range of negative social outcomes throughout life. Motivated by the potential of generative AI conversational agents to provide scalable and personalized support for cultivating mental resilience, we designed Milo, an LLM digital companion grounded in cognitive behavioral therapy (CBT) and tailored specifically for teenagers. Milo promotes greater involvement of teenagers in the development of emotional awareness and resilience strategies through agent customization and an interactive interface.

@inproceedings{bao2025milo,
  title = {MILO: An LLM Multi-Stage Conversational Agent for Fostering Teenagers' Mental Resilience},
  author = {Bao, Han and Yu, Yongan and Wang, Bohan and Lu, Xiaowen and Tong, Xin},
  booktitle = {Adjunct Proceedings of the 38th Annual ACM Symposium on User Interface Software and Technology (UIST 2025)},
  pages = {1--3},
  url = {https://dl.acm.org/doi/full/10.1145/3746058.3758411},
  year = {2025},
}

- WXImpactBench: A Disruptive Weather Impact Understanding Benchmark for Evaluating Large Language Models
  Yongan Yu, Qingchen Hu, Xianda Du, and 3 more authors
  In Findings of the Association for Computational Linguistics: ACL 2025, 2025

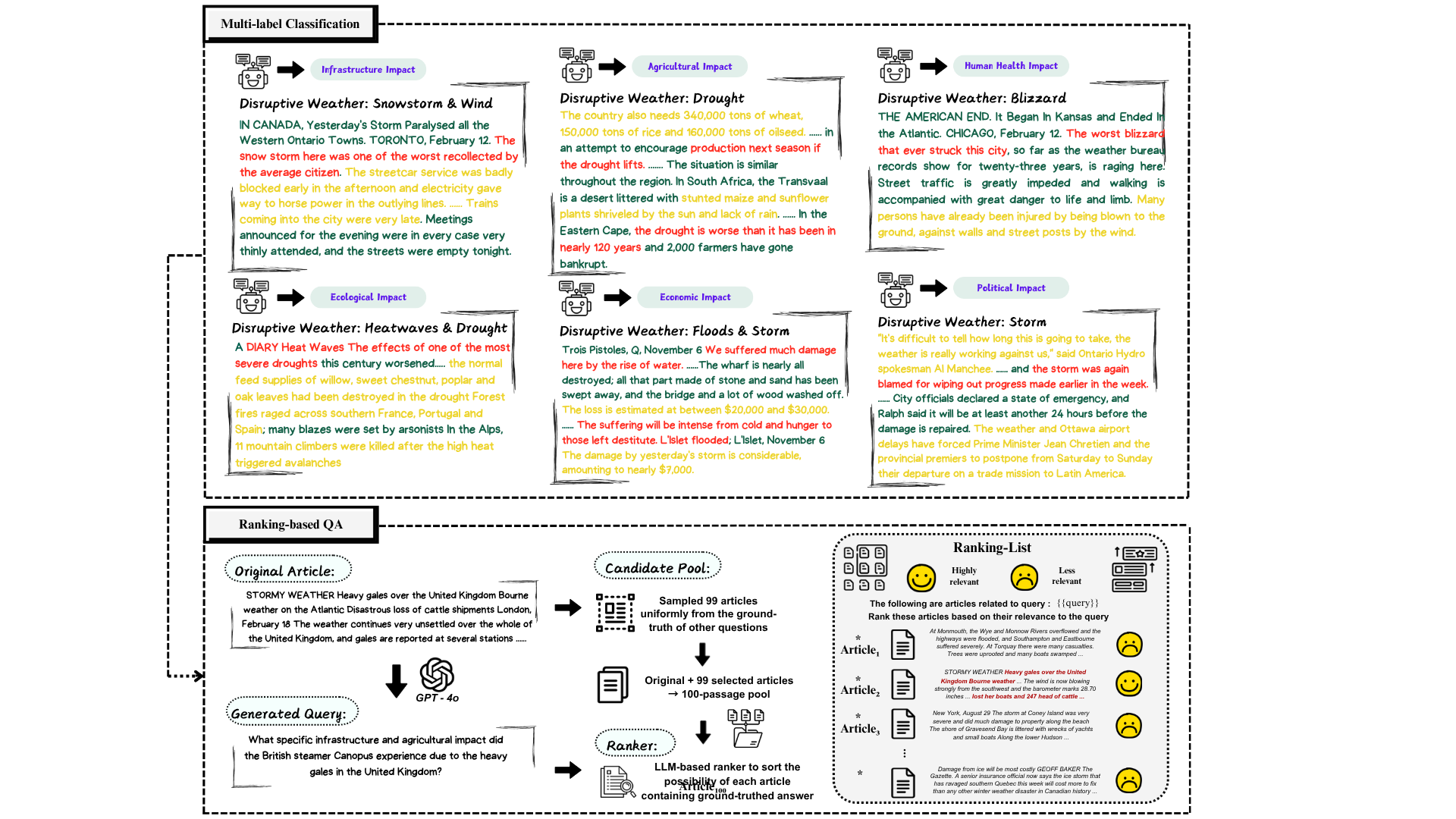

Climate change adaptation requires an understanding of disruptive weather impacts on society, a task to which large language models (LLMs) might be applicable. However, their effectiveness is under-explored due to the difficulty of collecting a high-quality corpus and the lack of available benchmarks. The climate-related events stored in regional newspapers record how communities adapted to and recovered from disasters. Processing the original corpus, however, is non-trivial. In this study, we first develop a disruptive weather impact dataset with a carefully designed four-stage construction pipeline. Then, we propose WXImpactBench, the first benchmark for evaluating the capacity of LLMs on disruptive weather impacts. The benchmark involves two evaluation tasks: multi-label classification and ranking-based question answering. Extensive experiments evaluating a set of LLMs provide a first-hand analysis of the challenges in developing disruptive weather impact understanding and climate change adaptation systems. The constructed dataset and the code for the evaluation framework are available to help society protect against vulnerabilities from disasters.

@inproceedings{yu-etal-2025-wximpactbench,
  title = {{WXI}mpact{B}ench: A Disruptive Weather Impact Understanding Benchmark for Evaluating Large Language Models},
  author = {Yu, Yongan and Hu, Qingchen and Du, Xianda and Wang, Jiayin and Mo, Fengran and Sieber, Ren{\'e}e},
  editor = {Che, Wanxiang and Nabende, Joyce and Shutova, Ekaterina and Pilehvar, Mohammad Taher},
  booktitle = {Findings of the Association for Computational Linguistics: ACL 2025},
  address = {Vienna, Austria},
  publisher = {Association for Computational Linguistics},
  url = {https://aclanthology.org/2025.findings-acl.207/},
  pages = {4016--4035},
  isbn = {979-8-89176-256-5},
  year = {2025},
}

- From Recall to Reasoning: Automated Question Generation for Deeper Math Learning through Large Language Models
  Yongan Yu, Alexandre Krantz, and Nikki G Lobczowski
  In International Conference on Artificial Intelligence in Education (AIED 2025), 2025

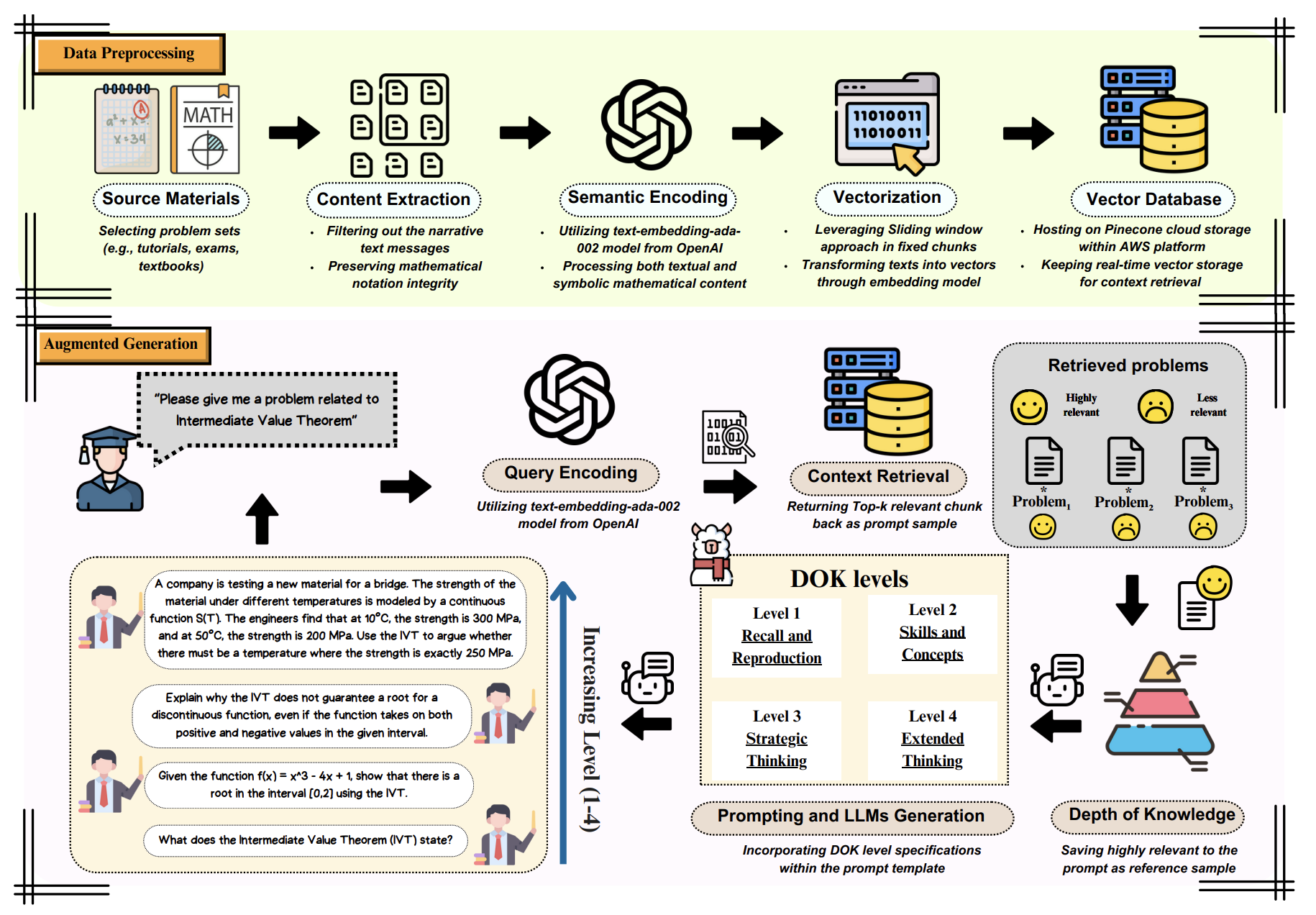

Educators have started to turn to Generative AI (GenAI) to help create new course content, but little is known about how they should do so. In this project, we investigated the first steps for optimizing content creation for advanced math. In particular, we looked at the ability of GenAI to produce high-quality practice problems that are relevant to the course content. We conducted two studies to: (1) explore the capabilities of current versions of publicly available GenAI and (2) develop an improved framework to address the limitations we found. Our results showed that GenAI can create math problems at various levels of quality with minimal support, but that providing examples and relevant content results in better quality outputs. This research can help educators decide the ideal way to adopt GenAI in their workflows, to create more effective educational experiences for students.

@inproceedings{yu2025recall,
  title = {From Recall to Reasoning: Automated Question Generation for Deeper Math Learning through Large Language Models},
  author = {Yu, Yongan and Krantz, Alexandre and Lobczowski, Nikki G},
  booktitle = {International Conference on Artificial Intelligence in Education (AIED 2025)},
  pages = {414--422},
  url = {https://link.springer.com/chapter/10.1007/978-3-031-98462-4_52},
  organization = {Springer},
  year = {2025},
}